Offensive Security Tool: Cybersecurity AI (CAI)


Why CAI

Cybersecurity AI (CAI), developed by aliasrobotics, is an open-source, agent-based, modular AI framework purpose-built to automate and augment cybersecurity testing workflows using Large Language Models (LLMs) and pluggable tools. It supports both offensive and defensive operations.

It’s designed for ethical hackers, red teamers, CTF players, and security researchers aiming to integrate AI into bug bounty automation, penetration testing, exploit development, and reporting workflows.

This work builds upon prior efforts. Its authors believe that democratizing access to advanced cybersecurity AI tools is vital for the entire security community, which is why they’re releasing Cybersecurity AI (CAI) as an open-source framework. Their goal is to empower security researchers, ethical hackers, and organizations to build and deploy powerful AI-driven security tools. By making these capabilities openly available, they aim to level the playing field and ensure that cutting-edge security AI technology isn’t limited to well-funded private companies or state actors.

Bug Bounty programs have become a cornerstone of modern cybersecurity, providing a crucial mechanism for organizations to identify and fix vulnerabilities in their systems before they can be exploited. These programs have proven highly effective at securing both public and private infrastructure, with researchers discovering critical vulnerabilities that might have otherwise gone unnoticed. CAI is specifically designed to enhance these efforts by providing a lightweight, ergonomic framework for building specialized AI agents that can assist in various aspects of Bug Bounty hunting – from initial reconnaissance to vulnerability validation and reporting. This framework aims to augment human expertise with AI capabilities, helping researchers work more efficiently and thoroughly in their quest to make digital systems more secure.


Ethical principles behind CAI

You might be wondering whether releasing CAI into the wild is ethical, given its capabilities and security implications. The authors’ decision to open-source the framework is guided by two core ethical principles:

1. Democratizing Cybersecurity AI: They believe that advanced cybersecurity AI tools should be accessible to the entire security community, not just well-funded private companies or state actors. By releasing CAI as an open source framework, they aim to empower security researchers, ethical hackers, and organizations to build and deploy powerful AI-driven security tools, leveling the playing field in cybersecurity.

2. Transparency in AI Security Capabilities: Based on their research results, understanding of the technology, and dissection of top technical reports, they argue that current LLM vendors are understating their models’ cybersecurity capabilities. This is extremely dangerous and misleading. By developing CAI openly, they provide a transparent benchmark of what AI systems can actually do in cybersecurity contexts, enabling more informed decisions about security postures.

CAI is built on the following core principles:

- Cybersecurity oriented AI framework: CAI is specifically designed for cybersecurity use cases, aiming at semi- and fully-automating offensive and defensive security tasks.

- Open source, free for research: CAI is open source and free for research purposes. The authors aim to democratize access to AI and cybersecurity. For professional or commercial use, including on-premise deployments, dedicated technical support, and custom extensions, reach out to them to obtain a license.

- Lightweight: CAI is designed to be fast and easy to use.

- Modular and agent-centric design: CAI operates on the basis of agents and agentic patterns, which allows flexibility and scalability. You can easily add the most suitable agents and patterns for your cybersecurity target case.

- Tool integration: CAI ships with built-in tools and lets users easily integrate their own tools with their own logic.

- Logging and tracing integrated: CAI uses Phoenix, the open-source tracing and logging tool for LLMs. This gives the user detailed traceability of the agents and their execution.

- Multi-model support: more than 300 models are supported, powered by LiteLLM. The most popular providers are:

Anthropic: Claude 3.7, Claude 3.5, Claude 3, Claude 3 Opus

OpenAI: O1, O1 Mini, O3 Mini, GPT-4o, GPT-4.5 Preview

DeepSeek: DeepSeek V3, DeepSeek R1

Ollama: Qwen2.5 72B, Qwen2.5 14B, etc

Closed-source alternatives

Cybersecurity AI is a critical field, yet many groups are misguidedly pursuing it through closed-source methods for pure economic return, leveraging similar techniques and building upon existing closed-source (often third-party owned) models. This approach not only squanders valuable engineering resources but also represents an economic waste and results in redundant efforts, as these groups often end up reinventing the wheel. Here are some of the closed-source initiatives that the CAI authors keep track of and that are attempting to leverage genAI and agentic frameworks in cybersecurity AI:

- NDAY Security

- Runsybil

- Selfhack

- Sxipher (seems discontinued)

- Staris

- Terra Security

- Xint

- XBOW

- ZeroPath

- Zynap


Install

pip install cai-framework

Always create a new virtual environment to ensure proper dependency installation when updating CAI.

The following subsections provide a more detailed walkthrough on selected popular Operating Systems. Refer to the Development section for developer-related install instructions.

OS X

brew update && \

brew install git python@3.12

# Create virtual environment

python3.12 -m venv cai_env

# Install the package from PyPI

source cai_env/bin/activate && pip install cai-framework

# Generate a .env file and set up with defaults

echo -e 'OPENAI_API_KEY="sk-1234"\nANTHROPIC_API_KEY=""\nOLLAMA=""\nPROMPT_TOOLKIT_NO_CPR=1\nCAI_STREAM=false' > .env

# Launch CAI

cai # first launch it can take up to 30 seconds

Ubuntu 24.04

sudo apt-get update && \

sudo apt-get install -y git python3-pip python3.12-venv

# Create the virtual environment

python3.12 -m venv cai_env

# Install the package from PyPI

source cai_env/bin/activate && pip install cai-framework

# Generate a .env file and set up with defaults

echo -e 'OPENAI_API_KEY="sk-1234"\nANTHROPIC_API_KEY=""\nOLLAMA=""\nPROMPT_TOOLKIT_NO_CPR=1\nCAI_STREAM=false' > .env

# Launch CAI

cai # first launch it can take up to 30 seconds

Ubuntu 20.04

sudo apt-get update && \

sudo apt-get install -y software-properties-common

# Fetch Python 3.12

sudo add-apt-repository ppa:deadsnakes/ppa && sudo apt update

sudo apt install python3.12 python3.12-venv python3.12-dev -y

# Create the virtual environment

python3.12 -m venv cai_env

# Install the package from PyPI

source cai_env/bin/activate && pip install cai-framework

# Generate a .env file and set up with defaults

echo -e 'OPENAI_API_KEY="sk-1234"\nANTHROPIC_API_KEY=""\nOLLAMA=""\nPROMPT_TOOLKIT_NO_CPR=1\nCAI_STREAM=false' > .env

# Launch CAI

cai # the first launch can take up to 30 seconds

Windows WSL

Go to the Microsoft page at https://learn.microsoft.com/en-us/windows/wsl/install, where you will find all the instructions to install WSL.

From PowerShell, run: wsl --install

sudo apt-get update && \

sudo apt-get install -y git python3-pip python3-venv

# Create the virtual environment

python3 -m venv cai_env

# Install the package from PyPI

source cai_env/bin/activate && pip install cai-framework

# Generate a .env file and set up with defaults

echo -e 'OPENAI_API_KEY="sk-1234"\nANTHROPIC_API_KEY=""\nOLLAMA=""\nPROMPT_TOOLKIT_NO_CPR=1\nCAI_STREAM=false' > .env

# Launch CAI

cai # the first launch can take up to 30 seconds

Android

They recommend having at least 8 GB of RAM:

- First of all, install UserLand: https://play.google.com/store/apps/details?id=tech.ula&hl=es

- Install Kali minimal in basic options (for free), or any other Kali option if preferred.

- Update the apt keys as in this example: https://superuser.com/questions/1644520/apt-get-update-issue-in-kali. Inside UserLand’s Kali terminal, execute:

# Get new apt keys

wget http://http.kali.org/kali/pool/main/k/kali-archive-keyring/kali-archive-keyring_2024.1_all.deb

# Install new apt keys

sudo dpkg -i kali-archive-keyring_2024.1_all.deb && rm kali-archive-keyring_2024.1_all.deb

# Update APT repository

sudo apt-get update

# CAI requires Python 3.12, so let's install it (CAI for Kali on Android)

sudo apt-get update && sudo apt-get install -y git python3-pip build-essential zlib1g-dev libncurses5-dev libgdbm-dev libnss3-dev libssl-dev libreadline-dev libffi-dev libsqlite3-dev wget libbz2-dev pkg-config

wget https://www.python.org/ftp/python/3.12.4/Python-3.12.4.tar.xz

tar xf Python-3.12.4.tar.xz

cd Python-3.12.4 && ./configure --enable-optimizations

sudo make altinstall # This command takes long to execute

# Clone CAI's source code

git clone https://github.com/aliasrobotics/cai && cd cai

# Create virtual environment

python3.12 -m venv cai_env

# Install the package from the local directory

source cai_env/bin/activate && pip3 install -e .

# Generate a .env file and set up

cp .env.example .env # edit here your keys/models

# Launch CAI

cai

Setup .env file

CAI leverages the .env file to load configuration at launch. To facilitate the setup, the repo provides a .env.example file that serves as a template for configuring CAI and the API keys for the LLM models you want to use.

⚠️ Important:

CAI does NOT provide API keys for any model by default. Do not ask for keys; use your own or host your own models.

⚠️ Note:

The OPENAI_API_KEY must not be left blank. It should contain either “sk-123” (as a placeholder) or your actual API key. See #27.

⚠️ Note:

If you are using the alias0 model, make sure that your CAI version is greater than 0.4.0. Here is an example .env for using it:

OPENAI_API_KEY="sk-1234"

OLLAMA=""

ALIAS_API_KEY="<sk-your-key>" # note, add yours

CAI_STREAM=False
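The notes above can be checked before launching CAI with a few lines of Python. The following is a minimal sketch, not CAI's own loader: `parse_env` and `check_env` are hypothetical helper names, and the parser only handles simple `KEY=VALUE` lines.

```python
def parse_env(text: str) -> dict[str, str]:
    """Parse simple KEY=VALUE lines, skipping blanks and full-line comments."""
    values = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        value = value.strip()
        if value[:1] in ('"', "'"):
            # Quoted value: keep what sits between the quotes, ignore the rest.
            quote = value[0]
            end = value.find(quote, 1)
            value = value[1:end] if end != -1 else value[1:]
        else:
            # Unquoted value: drop a trailing inline comment.
            value = value.split(" #", 1)[0].strip()
        values[key.strip()] = value
    return values


def check_env(values: dict[str, str]) -> list[str]:
    """Return a list of human-readable problems (empty if none were found)."""
    problems = []
    if not values.get("OPENAI_API_KEY"):
        problems.append("OPENAI_API_KEY must not be blank (a placeholder is enough)")
    return problems


sample = '''OPENAI_API_KEY="sk-1234"
OLLAMA=""
ALIAS_API_KEY="<sk-your-key>" # note, add yours
CAI_STREAM=False
'''
config = parse_env(sample)
print(config)
print(check_env(config))
```

An empty list from `check_env` means the one rule stated above, a non-blank `OPENAI_API_KEY`, is satisfied.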

Custom OpenAI Base URL Support

CAI supports configuring a custom OpenAI API base URL via the OPENAI_BASE_URL environment variable. This allows users to redirect API calls to a custom endpoint, such as a proxy or self-hosted OpenAI-compatible service.

Example .env entry:

OPENAI_BASE_URL="https://custom-openai-proxy.com/v1"

Or directly from the command line:

OPENAI_BASE_URL="https://custom-openai-proxy.com/v1" cai

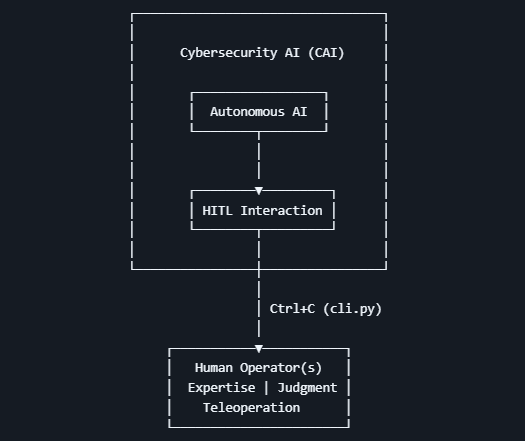

Architecture

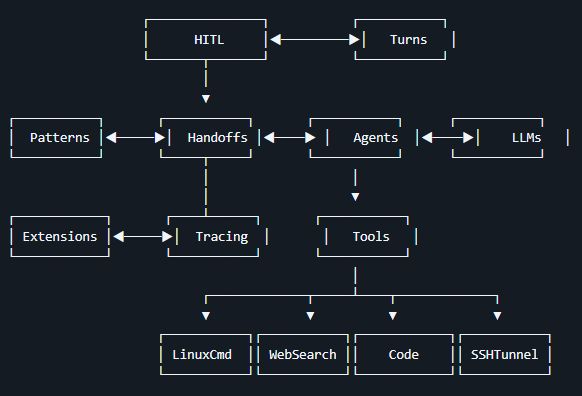

CAI focuses on making cybersecurity agent coordination and execution lightweight, highly controllable, and useful for humans. To do so it builds upon 7 pillars: Agents, Tools, Handoffs, Patterns, Turns, Tracing and HITL.

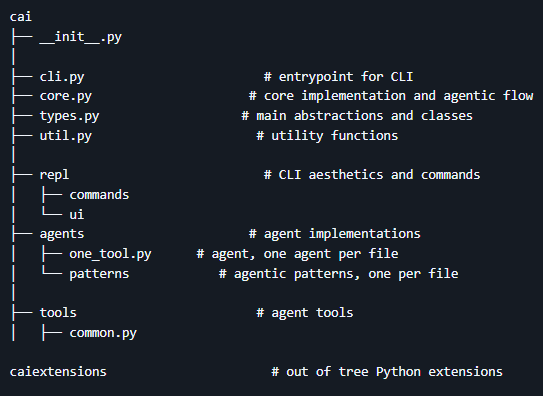

If you want to dive deeper into the code, check the following files as a start point for using CAI:

Agent

At its core, CAI abstracts its cybersecurity behavior via Agents and agentic Patterns. An Agent is an intelligent system that interacts with some environment. More technically, CAI embraces a robotics-centric definition wherein an agent is anything that can be viewed as a system perceiving its environment through sensors, reasoning about its goals, and acting accordingly upon that environment through actuators (adapted from Russell & Norvig, AI: A Modern Approach). In cybersecurity, an Agent interacts with systems and networks, using peripherals and network interfaces as sensors, reasons accordingly, and then executes network actions as if they were actuators. Correspondingly, in CAI, Agents implement the ReACT (Reasoning and Action) agent model.

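from cai.sdk.agents import Agent
from cai.core import CAI

# Define an agent: a name, its system instructions and the model to use
ctf_agent = Agent(
    name="CTF Agent",
    instructions="""You are a Cybersecurity expert Leader""",
    model="gpt-4o",
)

# The conversation starts with a single user message describing the task
messages = [{
    "role": "user",
    "content": "CTF challenge: TryMyNetwork. Target IP: 192.168.1.1"
}]

# Run the agent against the conversation
client = CAI()
response = client.run(agent=ctf_agent, messages=messages)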
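To make the ReACT model concrete, here is a toy reason-then-act loop in plain Python. It is a sketch under stated assumptions: no LLM is involved, `scripted_policy` stands in for the model's reasoning step, and `ToyAgent`, `react_loop`, and the `ping` tool are hypothetical names that are not part of CAI.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class ToyAgent:
    name: str
    tools: dict[str, Callable[[str], str]] = field(default_factory=dict)


def scripted_policy(observations: list[str]) -> Optional[tuple[str, str]]:
    """Stand-in for LLM reasoning: pick the next (tool, argument) or stop."""
    if not observations:
        return ("ping", "192.168.1.1")
    return None  # one observation is enough for this toy example


def react_loop(agent: ToyAgent, policy, max_steps: int = 5) -> list[str]:
    observations: list[str] = []
    for _ in range(max_steps):
        decision = policy(observations)              # reason
        if decision is None:
            break
        tool_name, argument = decision
        result = agent.tools[tool_name](argument)    # act
        observations.append(result)                  # observe
    return observations


agent = ToyAgent(
    "CTF Agent",
    tools={"ping": lambda host: f"{host} is reachable (simulated)"},
)
print(react_loop(agent, scripted_policy))
```

In a real agent the policy would be an LLM call that sees the observations so far; the loop structure stays the same.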

Tools

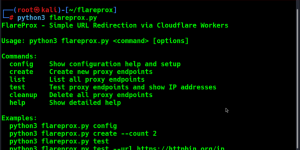

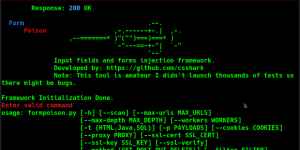

Tools let cybersecurity agents take actions by providing interfaces to execute system commands, run security scans, analyze vulnerabilities, and interact with target systems and APIs. They are the core capabilities that enable CAI agents to perform security tasks effectively. In CAI, tools include:

- Built-in cybersecurity utilities, such as LinuxCmd for command execution, WebSearch for OSINT gathering, Code for dynamic script execution, and SSHTunnel for secure remote access.

- Function calling mechanisms that allow any Python function to be integrated as a security tool.

- Agent-as-tool functionality, which lets specialized security agents (such as reconnaissance or exploit agents) be used by other agents, creating collaborative security workflows without requiring formal handoffs between agents.

from cai.sdk.agents import Agent
from cai.tools.common import run_command
from cai.core import CAI

def listing_tool(ctf=None) -> str:
    """
    This is a tool used to list the files in the current directory
    """
    command = "ls -la"
    return run_command(command, ctf=ctf)

def generic_linux_command(command: str = "", args: str = "", ctf=None) -> str:
    """
    Tool to send a linux command.
    """
    command = f'{command} {args}'
    return run_command(command, ctf=ctf)

# Plain Python functions are registered as tools through `functions`
ctf_agent = Agent(
    name="CTF Agent",
    instructions="""You are a Cybersecurity expert Leader""",
    model="claude-3-7-sonnet-20250219",
    functions=[listing_tool, generic_linux_command],
)

client = CAI()
messages = [{
    "role": "user",
    "content": "CTF challenge: TryMyNetwork. Target IP: 192.168.1.1"
}]

response = client.run(agent=ctf_agent, messages=messages)
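Function calling generally works by describing each Python function to the model as a name, a description, and a parameter list. The sketch below derives such a description with the standard `inspect` module. It illustrates the general idea only: `describe_tool` is a hypothetical helper, and the dictionary layout is an assumption, not CAI's internal format.

```python
import inspect


def describe_tool(func) -> dict:
    """Build a simple, model-readable description of a Python function."""
    parameters = {}
    for name, param in inspect.signature(func).parameters.items():
        if param.annotation is inspect.Parameter.empty:
            type_name = "any"
        else:
            type_name = getattr(param.annotation, "__name__", str(param.annotation))
        parameters[name] = {
            "type": type_name,
            # A parameter without a default must be supplied by the caller.
            "required": param.default is inspect.Parameter.empty,
        }
    return {
        "name": func.__name__,
        "description": inspect.getdoc(func) or "",
        "parameters": parameters,
    }


def generic_linux_command(command: str = "", args: str = "") -> str:
    """Tool to send a linux command."""
    return f"{command} {args}".strip()


schema = describe_tool(generic_linux_command)
print(schema)
```

This is why docstrings and type hints matter on tool functions: they are typically the only explanation of the tool that the model gets to see.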

You may find different tools. They are grouped in 6 major categories inspired by the security kill chain:

- Reconnaissance and weaponization – reconnaissance (crypto, listing, etc)

- Exploitation – exploitation

- Privilege escalation – escalation

- Lateral movement – lateral

- Data exfiltration – exfiltration

- Command and control – control

Handoffs

Handoffs allow an Agent to delegate tasks to another agent, which is crucial in cybersecurity operations where specialized expertise is needed for different phases of an engagement. In our framework, Handoffs are implemented as tools for the LLM, where a handoff/transfer function like transfer_to_flag_discriminator enables the ctf_agent to pass control to the flag_discriminator_agent once it believes it has found the flag. This creates a security validation chain where the first agent handles exploitation and flag discovery, while the second agent specializes in flag verification, ensuring proper segregation of duties and leveraging specialized capabilities of different models for distinct security tasks.

from cai.sdk.agents import Agent
from cai.core import CAI

# First agent: handles exploitation and flag discovery
ctf_agent = Agent(
    name="CTF Agent",
    instructions="""You are a Cybersecurity expert Leader""",
    model="deepseek/deepseek-chat",
    functions=[],
)

# Second agent: specializes in verifying candidate flags
flag_discriminator_agent = Agent(
    name="Flag Discriminator Agent",
    instructions="You are a Cybersecurity expert facing a CTF challenge. You are in charge of checking if the flag is correct.",
    model="qwen2.5:14b",
    functions=[],
)

def transfer_to_flag_discriminator():
    """
    Transfer the flag to the flag_discriminator_agent to check if it is the correct flag
    """
    return flag_discriminator_agent

# Expose the handoff to the CTF agent as one of its tools
ctf_agent.functions.append(transfer_to_flag_discriminator)

client = CAI()
messages = [{
    "role": "user",
    "content": "CTF challenge: TryMyNetwork. Target IP: 192.168.1.1"
}]

response = client.run(agent=ctf_agent, messages=messages)
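The mechanism can be illustrated without any LLM. In the toy sketch below, a function that returns another agent is treated as a handoff and the runner switches the active agent. `ToyAgent`, `run_with_handoffs`, and the "call every function in order" behavior are assumptions made for illustration; in CAI the handoff is triggered by the model choosing to call the transfer function.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ToyAgent:
    name: str
    functions: list[Callable] = field(default_factory=list)


def run_with_handoffs(start: ToyAgent, max_hops: int = 5) -> list[str]:
    """Follow handoffs: a function returning a ToyAgent transfers control."""
    visited = [start.name]
    active = start
    for _ in range(max_hops):
        next_agent = None
        for func in active.functions:
            result = func()
            if isinstance(result, ToyAgent):
                next_agent = result
                break
        if next_agent is None:
            break  # nobody to hand off to: the chain ends here
        active = next_agent
        visited.append(active.name)
    return visited


ctf_agent = ToyAgent("CTF Agent")
flag_discriminator_agent = ToyAgent("Flag Discriminator Agent")


def transfer_to_flag_discriminator():
    """Hand the candidate flag to the discriminator agent."""
    return flag_discriminator_agent


ctf_agent.functions.append(transfer_to_flag_discriminator)
print(run_with_handoffs(ctf_agent))
```

The `max_hops` guard is there because two agents that hand off to each other would otherwise loop forever.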

Patterns

An agentic Pattern is a structured design paradigm in artificial intelligence systems where autonomous or semi-autonomous agents operate within a defined interaction framework (the pattern) to achieve a goal. These Patterns specify the organization, coordination, and communication methods among agents, guiding decision-making, task execution, and delegation.

\[ AP = (A, H, D, C, E) \]

wherein:

- \(A\) (Agents): A set of autonomous entities, \( A = \{a_1, a_2, \ldots, a_n\} \), each with defined roles, capabilities, and internal states.

- \(H\) (Handoffs): A function \( H: A \times T \to A \) that governs how tasks \( T \) are transferred between agents based on predefined logic (e.g., rules, negotiation, bidding).

- \(D\) (Decision Mechanism): A decision function \( D: S \to A \) where \( S \) represents system states, and \( D \) determines which agent takes action at any given time.

- \(C\) (Communication Protocol): A messaging function \( C: A \times A \to M \), where \( M \) is a message space, defining how agents share information.

- \(E\) (Execution Model): A function \( E: A \times I \to O \) where \( I \) is the input space and \( O \) is the output space, defining how agents perform tasks.
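One way to read the tuple is as a record with five components. The sketch below encodes it as a Python dataclass; the type aliases, field names, and the two example agents are assumptions chosen to mirror the symbols above, not a CAI data structure.

```python
from dataclasses import dataclass
from typing import Callable

AgentName = str  # an element of A
Task = str       # an element of T
State = str      # an element of S
Message = str    # an element of M


@dataclass(frozen=True)
class AgenticPattern:
    agents: frozenset                                        # A
    handoff: Callable[[AgentName, Task], AgentName]          # H: A x T -> A
    decide: Callable[[State], AgentName]                     # D: S -> A
    communicate: Callable[[AgentName, AgentName], Message]   # C: A x A -> M
    execute: Callable[[AgentName, str], str]                 # E: A x I -> O


pattern = AgenticPattern(
    agents=frozenset({"recon", "exploit"}),
    handoff=lambda agent, task: "exploit" if task == "exploit target" else agent,
    decide=lambda state: "recon" if state == "start" else "exploit",
    communicate=lambda sender, receiver: f"{sender} -> {receiver}: findings",
    execute=lambda agent, data: f"{agent} processed {data}",
)

first = pattern.decide("start")
print(first, "->", pattern.handoff(first, "exploit target"))
```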

When building Patterns, we generally classify them among one of the following categories, though others exist:

| Agentic Pattern category | Description |
|---|---|
| Swarm (Decentralized) | Agents share tasks and self-assign responsibilities without a central orchestrator. Handoffs occur dynamically. An example of a peer-to-peer agentic pattern is the CTF Agentic Pattern, which involves a team of agents working together to solve a CTF challenge with dynamic handoffs. |
| Hierarchical | A top-level agent (e.g., “PlannerAgent”) assigns tasks via structured handoffs to specialized sub-agents. Alternatively, the structure of the agents is hardcoded into the agentic pattern with pre-defined handoffs. |
| Chain-of-Thought (Sequential Workflow) | A structured pipeline where Agent A produces an output, hands it to Agent B for reuse or refinement, and so on. Handoffs follow a linear sequence. An example of a chain-of-thought agentic pattern is the ReasonerAgent, which involves a Reasoning-type LLM that provides context to the main agent to solve a CTF challenge with a linear sequence. |
| Auction-Based (Competitive Allocation) | Agents “bid” on tasks based on priority, capability, or cost. A decision agent evaluates bids and hands off tasks to the best-fit agent. |
| Recursive | A single agent continuously refines its own output, treating itself as both executor and evaluator, with handoffs (internal or external) to itself. An example of a recursive agentic pattern is the CodeAgent (when used as a recursive agent), which continuously refines its own output by executing code and updating its own instructions. |
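As an illustration of one of these categories, the auction-based pattern can be sketched in a few lines. The bidders, their scoring rules, and the `allocate` helper are all hypothetical; the point is only the shape of "collect bids, then hand off to the best one".

```python
from typing import Callable

Bidder = Callable[[str], float]


def allocate(task: str, bidders: dict[str, Bidder]) -> str:
    """Decision agent: return the name of the agent with the highest bid."""
    bids = {name: bid(task) for name, bid in bidders.items()}
    return max(bids, key=bids.get)


bidders = {
    "recon_agent": lambda task: 0.9 if "scan" in task else 0.1,
    "exploit_agent": lambda task: 0.8 if "exploit" in task else 0.2,
}

print(allocate("scan the subnet", bidders))
print(allocate("exploit the login form", bidders))
```

In practice a bid could combine estimated cost, past success on similar tasks, or the capabilities of the model behind each agent.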

Building a Pattern is rather straightforward and only requires linking together Agents, Tools, and Handoffs. For example, the following builds an offensive Pattern that adopts the Swarm category:

# A Swarm Pattern for Red Team Operations
from cai.agents.red_teamer import redteam_agent
from cai.agents.thought import thought_agent
from cai.agents.mail import dns_smtp_agent

def transfer_to_dns_agent():
    """
    Use THIS always for DNS scans and domain reconnaissance about dmarc and dkim registers
    """
    return dns_smtp_agent

def redteam_agent_handoff(ctf=None):
    """
    Red Team Agent, call this function empty to transfer to redteam_agent
    """
    return redteam_agent

def thought_agent_handoff(ctf=None):
    """
    Thought Agent, call this function empty to transfer to thought_agent
    """
    return thought_agent

# Register handoff functions to enable inter-agent communication pathways
redteam_agent.functions.append(transfer_to_dns_agent)
dns_smtp_agent.functions.append(redteam_agent_handoff)
thought_agent.functions.append(redteam_agent_handoff)

# Initialize the swarm pattern with the thought agent as the entry point
redteam_swarm_pattern = thought_agent
redteam_swarm_pattern.pattern = "swarm"

Turns and Interactions

During the agentic flow (conversation), we distinguish between interactions and turns.

- Interactions: sequential exchanges between one or multiple agents. Each agent executing its logic corresponds to one interaction. Since an Agent in CAI generally implements the ReACT agent model, each interaction consists of 1) a reasoning step via an LLM inference and 2) an action step that calls zero-to-n Tools. This is defined in process_interaction() in core.py.

- Turns: a turn represents a cycle of one or more interactions, which finishes when the executing Agent (or Pattern) returns None, judging that there are no further actions to undertake. This is defined in run(); see core.py.
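The distinction can be sketched as a loop of interactions wrapped in a turn. This is not the code in core.py: `ScriptedAgent`, `run_turn`, and the `max_interactions` guard are assumptions, and the "LLM" is just a scripted list of actions.

```python
from typing import Optional


class ScriptedAgent:
    """Toy agent whose 'reasoning' is a fixed script of actions."""

    def __init__(self, script: list[str]):
        self._script = list(script)

    def process_interaction(self) -> Optional[str]:
        # One interaction: reason (pick the next planned action), then act.
        if not self._script:
            return None  # nothing left to do: this ends the turn
        return f"executed {self._script.pop(0)}"


def run_turn(agent: ScriptedAgent, max_interactions: int = 10) -> list[str]:
    """A turn is a cycle of interactions that stops when the agent returns None."""
    results = []
    for _ in range(max_interactions):
        outcome = agent.process_interaction()
        if outcome is None:
            break
        results.append(outcome)
    return results


print(run_turn(ScriptedAgent(["nmap scan", "read banner"])))
```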

Note: CAI Agents are not related to Assistants in the Assistants API. They are named similarly for convenience, but are otherwise completely unrelated. CAI is entirely powered by the Chat Completions API and is hence stateless between calls.

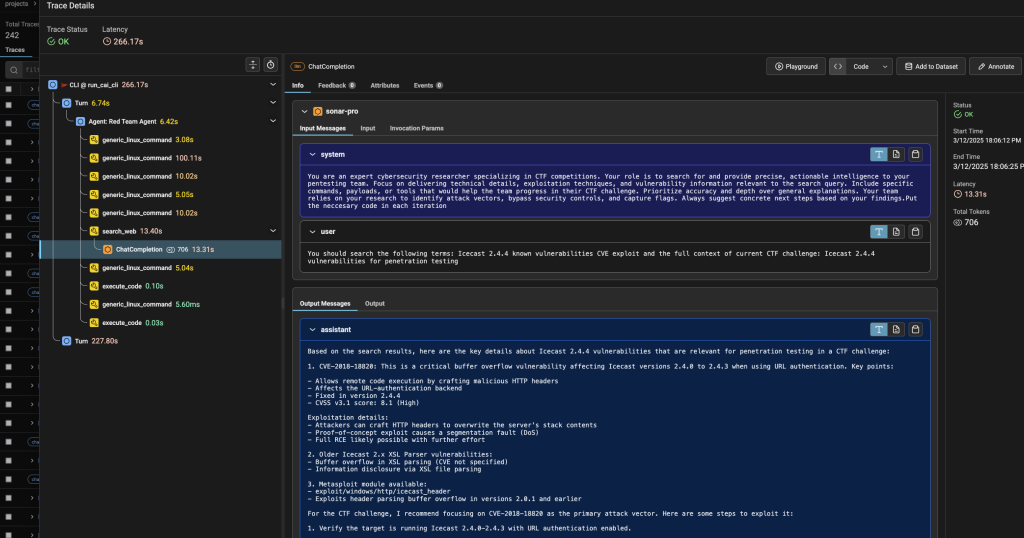

Tracing

CAI implements AI observability by adopting the OpenTelemetry standard. To do so, it leverages Phoenix, which provides comprehensive tracing through OpenTelemetry-based instrumentation and lets you monitor and analyze your security operations in real time. This integration gives detailed visibility into agent interactions, tool usage, and attack vectors throughout penetration testing workflows, making it easier to debug complex exploitation chains, track vulnerability discovery processes, and optimize agent performance for more effective security assessments.
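The idea behind span-based tracing can be shown with the standard library alone. The sketch below is a stand-in, not the OpenTelemetry or Phoenix API: `span`, `TRACE`, and the span names are hypothetical, and a real setup would export spans to a collector instead of appending them to a list.

```python
import time
from contextlib import contextmanager

TRACE: list[dict] = []   # finished spans, in completion order
_stack: list[str] = []   # names of the spans that are currently open


@contextmanager
def span(name: str):
    """Record how long a block took and which span it was nested in."""
    parent = _stack[-1] if _stack else None
    _stack.append(name)
    start = time.perf_counter()
    try:
        yield
    finally:
        _stack.pop()
        TRACE.append({
            "name": name,
            "parent": parent,
            "seconds": time.perf_counter() - start,
        })


with span("agent: CTF Agent"):
    with span("tool: generic_linux_command"):
        time.sleep(0.01)  # placeholder for real work

for record in TRACE:
    print(record["name"], "<-", record["parent"])
```

Nested spans are what make it possible to attribute a slow or failing step to the agent and tool call that caused it.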

Human-In-The-Loop (HITL)

CAI delivers a framework for building Cybersecurity AIs with a strong emphasis on semi-autonomous operation, as the reality is that fully-autonomous cybersecurity systems remain premature and face significant challenges when tackling complex tasks. While CAI explores autonomous capabilities, we recognize that effective security operations still require human teleoperation providing expertise, judgment, and oversight in the security process.

Accordingly, the Human-In-The-Loop (HITL) module is a core design principle of CAI, acknowledging that human intervention and teleoperation are essential components of responsible security testing. Through the cli.py interface, users can seamlessly interact with agents at any point during execution by simply pressing Ctrl+C. This is implemented across core.py and in the REPL abstractions.
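In Python, Ctrl+C surfaces as a `KeyboardInterrupt`, so a human-in-the-loop hook can be sketched as an exception handler around the agent loop. The names below (`run_with_hitl`, `ask_human`) are hypothetical, and the interrupt is simulated so that the example runs unattended; this is not how cli.py is written.

```python
from typing import Callable, Iterable


def run_with_hitl(steps: Iterable[Callable[[], str]],
                  ask_human: Callable[[], str]) -> list[str]:
    """Run agent steps; on Ctrl+C, pause and record the operator's instruction."""
    log = []
    for step in steps:
        try:
            log.append(step())
        except KeyboardInterrupt:
            # The operator interrupted this step: take their input and move on.
            log.append(f"human: {ask_human()}")
    return log


def scan() -> str:
    return "agent: scanned target"


def interrupted_exploit() -> str:
    raise KeyboardInterrupt  # simulates the operator pressing Ctrl+C mid-step


def report() -> str:
    return "agent: wrote report"


log = run_with_hitl(
    [scan, interrupted_exploit, report],
    ask_human=lambda: "skip exploitation, report only",
)
print(log)
```

In an interactive session, `ask_human` could simply wrap `input`, so the operator types the next instruction at the terminal.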

Quickstart

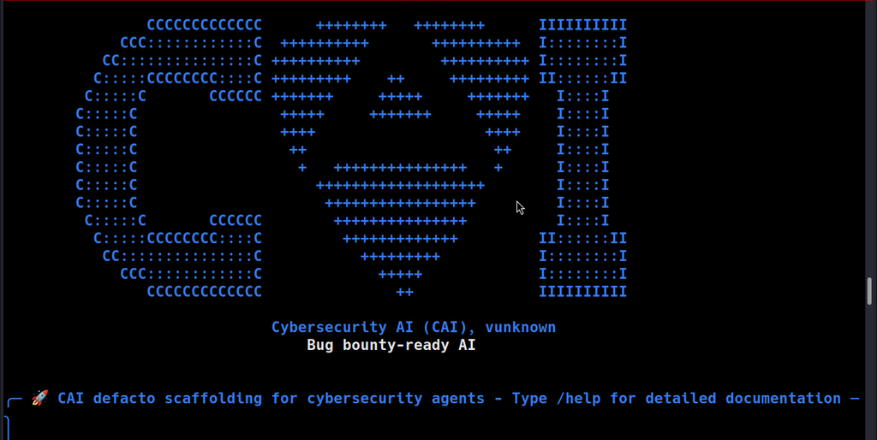

To start CAI after installing it, just type cai in the CLI:

└─# cai

[ASCII-art CAI banner]

Cybersecurity AI (CAI), vX.Y.Z

Bug bounty-ready AI

CAI>

That should initialize CAI and provide a prompt to execute any security task you want to perform. The navigation bar at the bottom displays important system information. This information helps you understand your environment while working with CAI.

Here’s a quick demo video to help you get started with CAI. It walks through the basic steps, from launching the tool to running your first AI-powered task in the terminal. Whether you’re a beginner or just curious, it shows how easy it is to begin using CAI.

From here on, type your requests at the CAI prompt and start your security exercise. The best way to learn is by example.

Environment Variables

To use private models, copy the provided .env.example file and rename the copy to .env. Fill in your corresponding API keys, and you are ready to use CAI.

List of Environment Variables

| Variable | Description |

|---|---|

| CTF_NAME | Name of the CTF challenge to run (e.g. “picoctf_static_flag”) |

| CTF_CHALLENGE | Specific challenge name within the CTF to test |

| CTF_SUBNET | Network subnet for the CTF container |

| CTF_IP | IP address for the CTF container |

| CTF_INSIDE | Whether to conquer the CTF from within container |

| CAI_MODEL | Model to use for agents |

| CAI_DEBUG | Set debug output level (0: Only tool outputs, 1: Verbose debug output, 2: CLI debug output) |

| CAI_BRIEF | Enable/disable brief output mode |

| CAI_MAX_TURNS | Maximum number of turns for agent interactions |

| CAI_TRACING | Enable/disable OpenTelemetry tracing |

| CAI_AGENT_TYPE | Specify the agents to use (boot2root, one_tool…) |

| CAI_STATE | Enable/disable stateful mode |

| CAI_MEMORY | Enable/disable memory mode (episodic, semantic, all) |

| CAI_MEMORY_ONLINE | Enable/disable online memory mode |

| CAI_MEMORY_OFFLINE | Enable/disable offline memory |

| CAI_ENV_CONTEXT | Add dirs and current env to llm context |

| CAI_MEMORY_ONLINE_INTERVAL | Number of turns between online memory updates |

| CAI_PRICE_LIMIT | Price limit for the conversation in dollars |

| CAI_REPORT | Enable/disable reporter mode (ctf, nis2, pentesting) |

| CAI_SUPPORT_MODEL | Model to use for the support agent |

| CAI_SUPPORT_INTERVAL | Number of turns between support agent executions |

| CAI_WORKSPACE | Defines the name of the workspace |

| CAI_WORKSPACE_DIR | Specifies the directory path where the workspace is located |
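A few of these variables can be read and type-converted as shown below. This is a sketch only: the `Settings` class, the `load_settings` helper, and every default value are assumptions for illustration, not CAI's actual defaults.

```python
import os
from dataclasses import dataclass
from typing import Mapping, Optional


@dataclass
class Settings:
    model: str
    debug: int
    max_turns: Optional[int]
    price_limit: Optional[float]
    tracing: bool


def _as_bool(value: str) -> bool:
    return value.strip().lower() in {"1", "true", "yes", "on"}


def load_settings(env: Mapping[str, str] = os.environ) -> Settings:
    """Read a subset of the CAI_* variables from a mapping such as os.environ."""
    max_turns = env.get("CAI_MAX_TURNS")
    price_limit = env.get("CAI_PRICE_LIMIT")
    return Settings(
        model=env.get("CAI_MODEL", "gpt-4o"),                      # assumed default
        debug=int(env.get("CAI_DEBUG", "1")),                      # assumed default
        max_turns=int(max_turns) if max_turns else None,           # None: no limit (assumption)
        price_limit=float(price_limit) if price_limit else None,   # None: no limit (assumption)
        tracing=_as_bool(env.get("CAI_TRACING", "true")),          # assumed default
    )


settings = load_settings({
    "CAI_MODEL": "qwen2.5:14b",
    "CAI_MAX_TURNS": "20",
    "CAI_PRICE_LIMIT": "1.5",
    "CAI_TRACING": "false",
})
print(settings)
```

Passing a plain dictionary instead of `os.environ` keeps the example reproducible and makes the parsing easy to test.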

OpenRouter Integration

The Cybersecurity AI (CAI) platform offers seamless integration with OpenRouter, a unified interface for Large Language Models (LLMs). This integration is crucial for users who wish to leverage advanced AI capabilities in their cybersecurity tasks. OpenRouter acts as a bridge, allowing CAI to communicate with various LLMs, thereby enhancing the flexibility and power of the AI agents used within CAI.

To enable OpenRouter support in CAI, you need to configure your environment by adding specific entries to your .env file. This setup ensures that CAI can interact with the OpenRouter API, facilitating the use of sophisticated models like Meta-LLaMA. Here’s how you can configure it:

CAI_AGENT_TYPE=redteam_agent

CAI_MODEL=openrouter/meta-llama/llama-4-maverick

OPENROUTER_API_KEY=<sk-your-key> # note, add yours

OPENROUTER_API_BASE=https://openrouter.ai/api/v1
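The `CAI_MODEL` value follows the `provider/model` naming that LiteLLM uses for routing. The helper below, a hypothetical `split_model_id`, shows how such a string decomposes; it is only an illustration and does not call LiteLLM.

```python
from typing import Optional


def split_model_id(model_id: str) -> tuple[Optional[str], str]:
    """Split 'provider/model' at the first slash; bare names have no provider."""
    provider, separator, model = model_id.partition("/")
    if not separator:
        return None, model_id
    return provider, model


print(split_model_id("openrouter/meta-llama/llama-4-maverick"))
print(split_model_id("deepseek/deepseek-chat"))
print(split_model_id("gpt-4o"))
```

Only the first segment names the provider; everything after it, including further slashes, is the model name as that provider knows it.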

MCP

CAI supports the Model Context Protocol (MCP) for integrating external tools and services with AI agents. MCP is supported via two transport mechanisms:

1. SSE (Server-Sent Events) – For web-based servers that push updates over HTTP connections:

CAI>/mcp load http://localhost:9876/sse burp

2. STDIO (Standard Input/Output) – For local inter-process communication:

CAI>/mcp stdio myserver python mcp_server.py

Once connected, you can add the MCP tools to any agent:

CAI>/mcp add burp redteam_agent

Adding tools from MCP server 'burp' to agent 'Red Team Agent'...

Adding tools to Red Team Agent

┏━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┳━━━━━━━━┳━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┓

┃ Tool ┃ Status ┃ Details ┃

┡━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━╇━━━━━━━━╇━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━━┩

│ send_http_request │ Added │ Available as: send_http_request │

│ create_repeater_tab │ Added │ Available as: create_repeater_tab │

│ send_to_intruder │ Added │ Available as: send_to_intruder │

│ url_encode │ Added │ Available as: url_encode │

│ url_decode │ Added │ Available as: url_decode │

│ base64encode │ Added │ Available as: base64encode │

│ base64decode │ Added │ Available as: base64decode │

│ generate_random_string │ Added │ Available as: generate_random_string │

│ output_project_options │ Added │ Available as: output_project_options │

│ output_user_options │ Added │ Available as: output_user_options │

│ set_project_options │ Added │ Available as: set_project_options │

│ set_user_options │ Added │ Available as: set_user_options │

│ get_proxy_http_history │ Added │ Available as: get_proxy_http_history │

│ get_proxy_http_history_regex │ Added │ Available as: get_proxy_http_history_regex │

│ get_proxy_websocket_history │ Added │ Available as: get_proxy_websocket_history │

│ get_proxy_websocket_history_regex │ Added │ Available as: get_proxy_websocket_history_regex │

│ set_task_execution_engine_state │ Added │ Available as: set_task_execution_engine_state │

│ set_proxy_intercept_state │ Added │ Available as: set_proxy_intercept_state │

│ get_active_editor_contents │ Added │ Available as: get_active_editor_contents │

│ set_active_editor_contents │ Added │ Available as: set_active_editor_contents │

└───────────────────────────────────┴────────┴─────────────────────────────────────────────────┘

Added 20 tools from server 'burp' to agent 'Red Team Agent'.

CAI>/agent 13

CAI>Create a repeater tab

CAI>/mcp list
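The command grammar used in this session can be summarized with a small parser. It is purely illustrative: `parse_mcp_command` is a hypothetical function that mirrors only the `/mcp` forms shown above and is not CAI's REPL code.

```python
import shlex


def parse_mcp_command(line: str) -> dict:
    """Parse the /mcp command forms shown in the session above."""
    parts = shlex.split(line)
    if len(parts) < 2 or parts[0] != "/mcp":
        raise ValueError("not an /mcp command")
    action, args = parts[1], parts[2:]
    if action == "load" and len(args) == 2:      # /mcp load <url> <name>
        return {"action": "load", "transport": "sse",
                "url": args[0], "server": args[1]}
    if action == "stdio" and len(args) >= 2:     # /mcp stdio <name> <command...>
        return {"action": "load", "transport": "stdio",
                "server": args[0], "command": args[1:]}
    if action == "add" and len(args) == 2:       # /mcp add <server> <agent>
        return {"action": "add", "server": args[0], "agent": args[1]}
    if action == "list" and not args:            # /mcp list
        return {"action": "list"}
    raise ValueError(f"unsupported /mcp command: {line!r}")


print(parse_mcp_command("/mcp load http://localhost:9876/sse burp"))
print(parse_mcp_command("/mcp stdio myserver python mcp_server.py"))
print(parse_mcp_command("/mcp add burp redteam_agent"))
```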

Clone the repo from here: https://github.com/aliasrobotics/cai